Before utility grids can achieve wide-scale deployment of wind energy, however, they need more efficient wind plants. This requires advancing our fundamental understanding of the flow physics governing wind-plant performance.

ExaWind, a U.S. Department of Energy (DOE) Exascale Computing Project, is tackling this challenge by developing new simulation capabilities to more accurately predict the complex flow physics of wind farms. The project entails a collaboration among the National Renewable Energy Laboratory (NREL), Sandia National Laboratories, Oak Ridge National Laboratory, the University of Texas at Austin, Parallel Geometric Algorithms, and — as of a few months ago — Lawrence Berkeley National Laboratory (Berkeley Lab).

“Our ExaWind challenge problem is to simulate the air flow of nine wind turbines arranged as a three-by-three array inside a space five kilometers by five kilometers on the ground and a kilometer high,” said Shreyas Ananthan, a research software engineer at NREL and lead technical expert on the project. “And we need to run about a hundred seconds of real-time simulation.”

By developing this virtual test bed, the researchers hope to revolutionize the design, operational control, and siting of wind plants, and to facilitate reliable grid integration. Doing so requires a combination of advanced supercomputers and unique simulation codes.

“We want to know things like the air velocity and air temperature across a big three-dimensional space,” said Ann Almgren, who leads the Center for Computational Sciences and Engineering in Berkeley Lab’s Computational Research Division. “But we care most about what’s happening right at the turbines where things are changing quickly. We want to focus our resources near these turbines, without neglecting what’s going on in the larger space.”

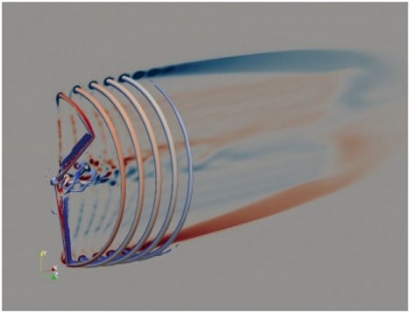

To achieve the desired accuracy, the researchers are solving fluid dynamics equations near the turbines using Nalu-Wind, a fully unstructured code that gives users the flexibility to describe the complex geometries near the turbines more accurately, Ananthan explained.

But this flexibility comes at a price. Unstructured mesh calculations have to store information not just about the location of all the mesh points but also about which points are connected to which. Structured meshes, meanwhile, are “logically rectangular,” which makes a lot of operations much simpler and faster.
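The trade-off can be illustrated with a minimal sketch. It is not taken from Nalu-Wind or AMReX; the tiny meshes and the function names `structured_neighbors` and `unstructured_neighbors` are hypothetical and chosen only for illustration. On the structured mesh, neighbors follow from index arithmetic alone, whereas the unstructured mesh must store and search an explicit connectivity table.

```python
# Structured ("logically rectangular") mesh: cells are addressed by (i, j).
# Neighbors are implied by index arithmetic, so no connectivity is stored.
NX, NY = 4, 3  # hypothetical mesh size


def structured_neighbors(i, j):
    """Return the in-bounds face neighbors of cell (i, j)."""
    candidates = [(i - 1, j), (i + 1, j), (i, j - 1), (i, j + 1)]
    return [(a, b) for a, b in candidates if 0 <= a < NX and 0 <= b < NY]


# Unstructured mesh: point coordinates plus an explicit table recording
# which points are connected (here, which three points form each triangle).
POINTS = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0), (2.0, 0.5)]
TRIANGLES = [(0, 1, 2), (0, 2, 3), (1, 4, 2)]


def unstructured_neighbors(point_id):
    """Return the points that share at least one triangle with point_id."""
    neighbors = set()
    for triangle in TRIANGLES:
        if point_id in triangle:
            neighbors.update(triangle)
    neighbors.discard(point_id)
    return sorted(neighbors)


if __name__ == "__main__":
    print(structured_neighbors(1, 1))
    print(unstructured_neighbors(2))
```

The structured lookup needs no stored data beyond the mesh dimensions, while the unstructured lookup depends on the `TRIANGLES` table, which is the extra information the paragraph above refers to.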

“Originally, ExaWind planned to use Nalu-Wind everywhere, but coupling Nalu-Wind with a structured grid code may offer a much faster time-to-solution,” Almgren said.

Luckily, Ananthan knew about Berkeley Lab’s AMReX, a C++ software framework that supports block-structured adaptive-mesh algorithms for solving systems of partial differential equations. AMReX supports simulations on a structured mesh hierarchy; at each level the mesh is made up of regular boxes, but the different levels have different spatial resolution.

Ananthan explained that they want the best of both worlds: unstructured mesh near the turbines and structured mesh elsewhere in the domain. The unstructured mesh and structured mesh have to communicate with each other, so the ExaWind team validated an overset mesh approach with an unstructured mesh near the turbines and a background structured mesh. That’s when they reached out to Almgren to collaborate.

“AMReX allows you to zoom in to get fine resolution in the regions you care about but have coarse resolution everywhere else,” Almgren said. The plan is for ExaWind to use an AMReX-based code (AMR-Wind) to resolve the entire domain except right around the turbines, where the researchers will use Nalu-Wind. AMR-Wind will generate finer and finer cells as they get closer to the turbines, basically matching the Nalu-Wind resolution where the codes meet. Nalu-Wind and AMR-Wind will talk to each other using a coupling code called TIOGA.
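As a rough illustration of how successive refinement closes the gap between a coarse background mesh and a fine near-turbine mesh, the sketch below counts how many refinement levels are needed for the cell size to reach a target. It does not call AMReX; the function name `levels_to_match`, the refinement ratio of 2, and the cell sizes in the usage lines are all assumptions made for illustration, not ExaWind's actual settings.

```python
def levels_to_match(coarse_dx, target_dx, ratio=2):
    """Count refinement levels needed until the cell size is <= target_dx.

    Each level divides the cell size by `ratio`. Returns a tuple of
    (number of levels, resulting finest cell size).
    """
    if coarse_dx <= 0 or target_dx <= 0:
        raise ValueError("cell sizes must be positive")
    if ratio < 2:
        raise ValueError("refinement ratio must be at least 2")

    levels = 0
    dx = float(coarse_dx)
    while dx > target_dx:
        dx /= ratio
        levels += 1
    return levels, dx


if __name__ == "__main__":
    # Hypothetical numbers: 10 m background cells refined toward 1.25 m cells.
    levels, finest = levels_to_match(10.0, 1.25)
    print(levels, finest)
```

With a fixed ratio, the cell size shrinks geometrically, so only a handful of levels separates a coarse far-field mesh from a much finer mesh near the turbines.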

Even with this strategy, the team needs high-performance computing. Ananthan’s initial performance studies were conducted on up to 1,024 Cori Haswell nodes at Berkeley Lab’s National Energy Research Scientific Computing Center (NERSC) and 49,152 Mira nodes at the Argonne Leadership Computing Facility.

“For the last three years, we’ve been using NERSC’s Cori heavily, as well as NREL’s Peregrine and Eagle,” said Ananthan. Moving forward, the team will also use the Summit system at the Oak Ridge Leadership Computing Facility and, ultimately, the Aurora and Frontier exascale supercomputers. These systems feature GPUs from different vendors: NVIDIA on Summit (and NERSC’s next-generation Perlmutter system), Intel on Aurora, and AMD on Frontier.

NERSC is a DOE Office of Science user facility.

Information provided by NERSC.gov/Jennifer Huber